OpenAI Restricts Access to GPT-5.5 Cyber After Criticizing Anthropic’s Limited Rollout of Mythos

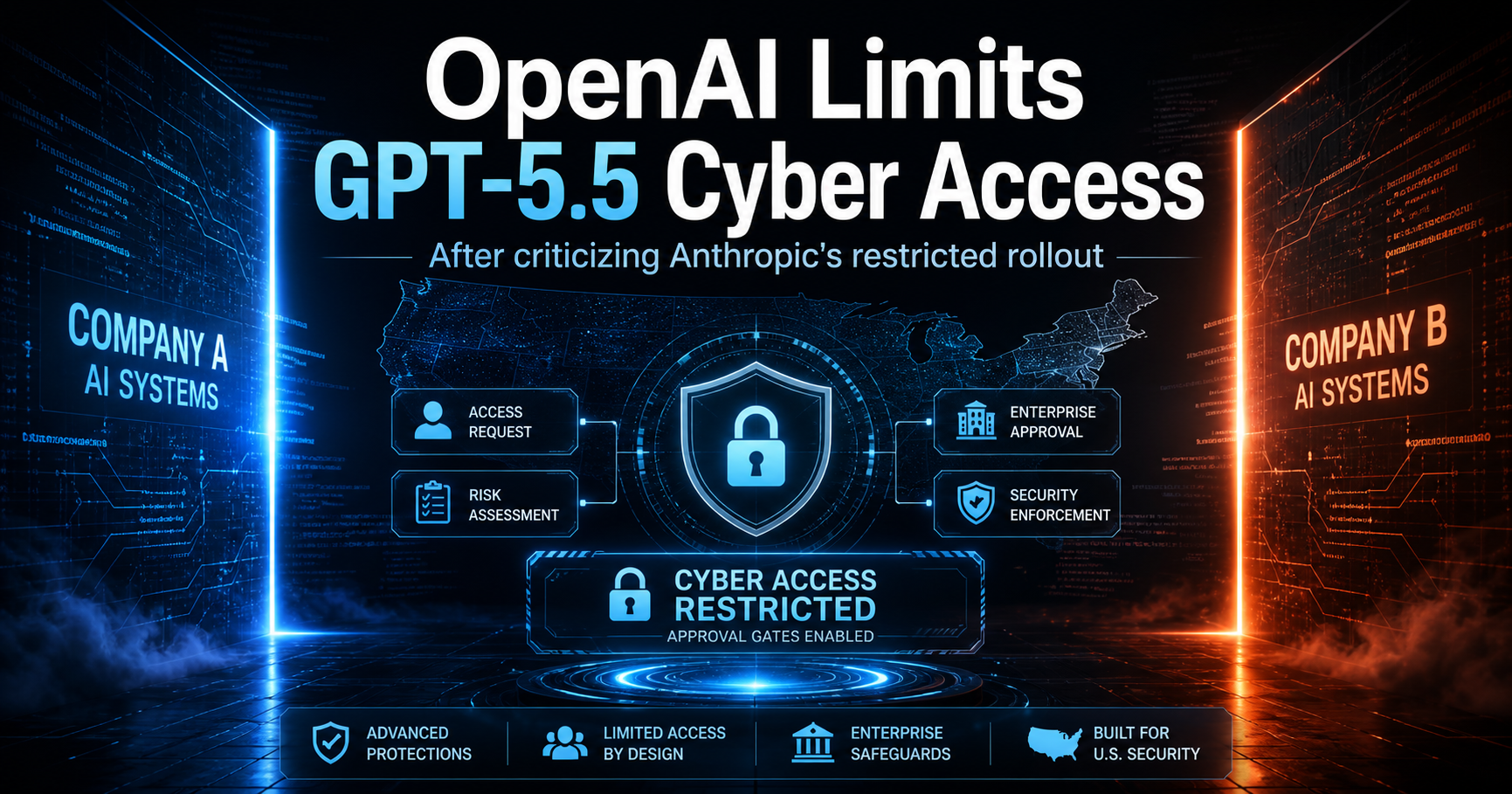

Artificial intelligence is moving deeper into cybersecurity, and the debate over who should have access to powerful AI security tools is becoming more urgent. OpenAI has confirmed that its cybersecurity-focused system, GPT-5.5 Cyber, will initially be available only to a limited group of approved users. The decision comes shortly after OpenAI CEO Sam Altman criticized Anthropic for taking a similar approach with its own cybersecurity tool, Mythos.

The situation has created a striking contrast in the AI industry. On one hand, major AI companies are racing to build advanced systems that can help defend networks, identify vulnerabilities, and strengthen digital infrastructure. On the other hand, those same tools may also introduce new risks if they fall into the wrong hands. For businesses, government agencies, and cybersecurity professionals across the United States, the issue is no longer theoretical. AI-assisted cyber defense is becoming a real part of the security landscape.

According to Altman, OpenAI plans to roll out GPT-5.5 Cyber first to what he described as “critical cyber defenders.” This suggests that early access will likely focus on professionals and organizations that protect important systems, such as enterprise networks, public infrastructure, financial institutions, healthcare systems, and government environments. OpenAI has also created an application process that requires applicants to describe their credentials and intended use before gaining access.

This controlled rollout indicates that OpenAI is treating GPT-5.5 Cyber as a high-impact tool rather than a general-purpose product. Based on the application details, GPT-5.5 Cyber appears to be designed to assist with tasks such as penetration testing, vulnerability discovery, exploitation analysis, and malware reverse engineering. In practical terms, the tool may help security teams find weaknesses before attackers do, test whether their defenses are strong enough, and better understand malicious software.

For American companies, this could be extremely valuable. Cyberattacks continue to affect organizations of every size, from small businesses to major corporations. Ransomware, phishing campaigns, supply chain attacks, and data breaches have become constant threats. A tool that can speed up security testing and help defenders respond faster could provide a meaningful advantage. However, that same power also explains why OpenAI is limiting access.

The main concern is dual use. Many cybersecurity tools can be used for both defense and offense. A penetration testing system can help a company secure its network, but it can also help a criminal search for weaknesses. Malware analysis can support defensive research, but similar capabilities could help bad actors improve malicious code. This is why OpenAI appears to be taking a cautious approach, even though Altman recently criticized Anthropic for doing something similar.

Anthropic’s cybersecurity tool, Mythos, was also released to a restricted set of users. Altman previously described Anthropic’s strategy as fear-based marketing, suggesting that the company may have been using safety concerns as part of a public relations message. Some critics agreed with that view and argued that Anthropic’s warnings sounded exaggerated. However, reports that an unauthorized group allegedly gained access to Mythos added weight to the concern that these systems can attract misuse attempts.

Now, OpenAI is facing the same challenge. By limiting access to GPT-5.5 Cyber, the company is effectively acknowledging that powerful AI cybersecurity tools require safeguards. The decision may look contradictory when compared with Altman’s earlier criticism of Anthropic, but it also reflects the difficult reality of this technology. AI companies want to promote innovation, but they also need to prevent tools from being used in ways that could damage businesses, public services, or national security.

The United States has a special stake in this issue. American organizations are frequent targets of cyberattacks, and the country’s economy relies heavily on digital infrastructure. Banks, hospitals, energy providers, defense contractors, cloud platforms, universities, and local governments all face constant pressure from cyber threats. If AI can help trained defenders detect risks more quickly, it could improve national resilience. At the same time, if access is too open, the same technology could increase the speed and scale of cybercrime.

OpenAI has stated that it is working with the U.S. government and trying to identify more users with legitimate cybersecurity credentials. This suggests that the company may gradually expand access as it develops stronger screening, monitoring, and safety systems. A phased release could allow OpenAI to collect feedback from trusted professionals while reducing the chance of abuse during the early stage.

From an industry perspective, this move may also influence how other AI companies release sensitive tools in the future. The AI sector has often promoted open access and rapid deployment, but cybersecurity may require a different model. Unlike a chatbot used for writing assistance or customer support, an AI system capable of vulnerability exploitation or malware reverse engineering carries more direct security implications. Companies may increasingly rely on approval systems, identity verification, usage monitoring, and partnerships with government agencies before launching similar products.

For cybersecurity professionals, the arrival of tools like GPT-5.5 Cyber and Mythos could represent a major shift in daily work. Security teams are often overwhelmed by alerts, patching demands, vulnerability reports, and incident response tasks. AI could help automate repetitive work, summarize technical findings, generate test cases, and support faster analysis. Smaller companies that lack large security teams may eventually benefit from these capabilities as well, provided access becomes broader and safer.

However, AI will not replace human judgment in cybersecurity. These tools can assist with analysis, but they still need expert oversight. A vulnerability report must be interpreted correctly. A penetration test must be authorized and controlled. Malware reverse engineering must be handled carefully and legally. The human professional remains essential because cybersecurity is not only a technical field; it also involves ethics, risk management, compliance, and accountability.

The controversy surrounding OpenAI and Anthropic highlights a larger tension in the AI race. Companies want to lead the market, criticize competitors, and present their own approach as better. But when they build tools with serious security implications, they often arrive at similar conclusions: access must be controlled, at least at first. That does not necessarily mean restriction is wrong. It may mean that the industry is beginning to understand the responsibility that comes with releasing advanced cyber capabilities.

For readers in the United States, the key takeaway is that AI-powered cybersecurity is entering a new phase. The technology is no longer limited to research labs or future predictions. It is being prepared for real-world use by selected defenders. The question now is how companies like OpenAI and Anthropic can balance innovation with safety, speed with responsibility, and public benefit with risk prevention.

If OpenAI succeeds, GPT-5.5 Cyber could become an important tool for strengthening American cyber defense. If access control fails, or if similar tools are misused, the consequences could be serious. The next few months may show whether restricted rollouts can provide a responsible path forward for powerful AI security systems.
