The Shift from Reactive Chatbots to Proactive AI Agents

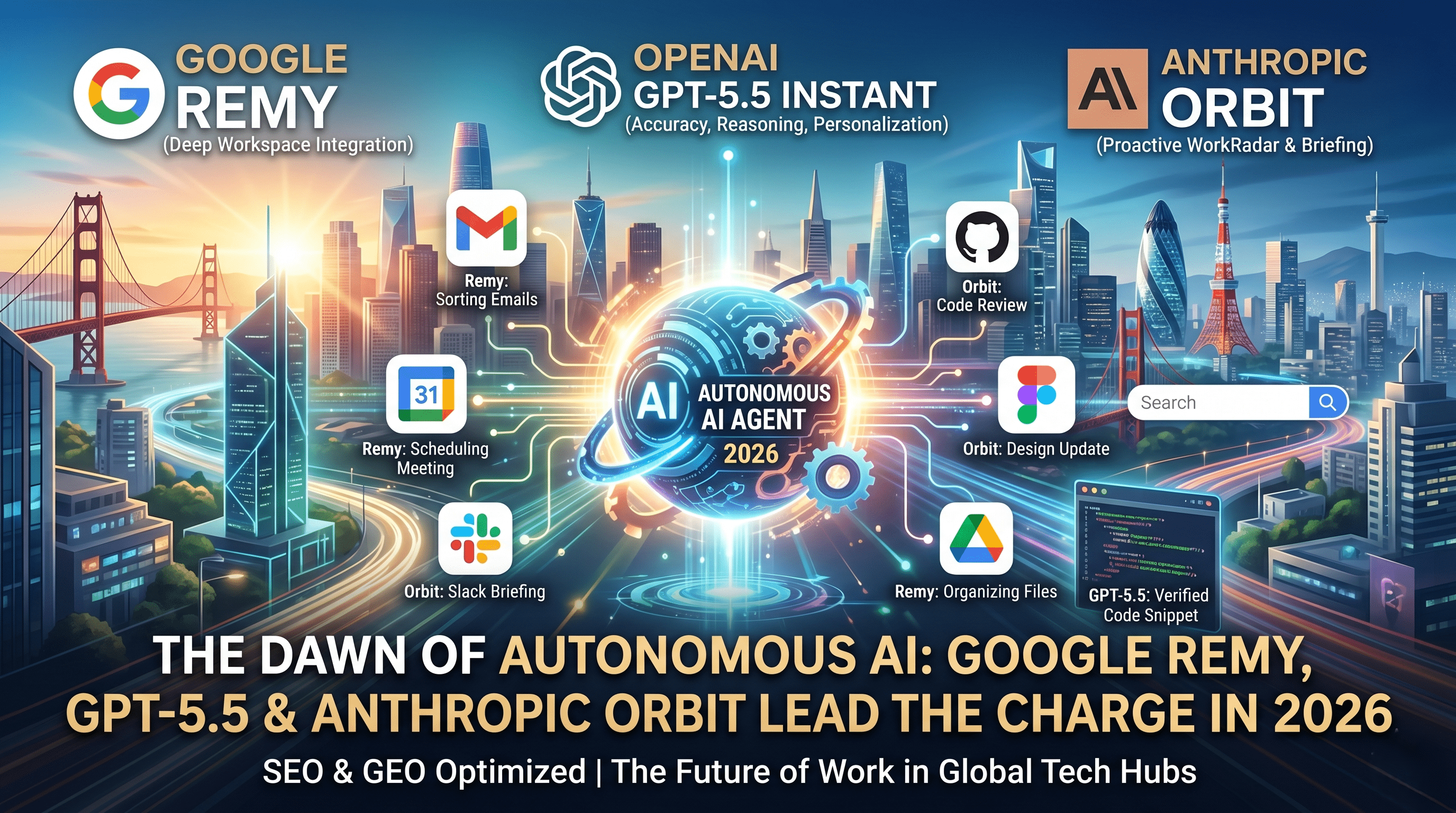

The artificial intelligence landscape is undergoing a major shift. We are no longer just talking about chatbots that wait for a prompt before generating a response. The industry is moving rapidly toward "agentic AI": autonomous systems that run in the background of our digital lives, anticipate needs, and execute multi-step workflows across applications.

From the tech epicenters of Mountain View to the rapidly expanding digital hubs across the globe, including cities like Casablanca where tech adoption is surging, businesses and professionals are preparing for this new wave of productivity. Recent internal leaks, platform updates, and beta testing phases from the big three—Google, OpenAI, and Anthropic—reveal exactly how this future is taking shape. Here is a comprehensive report on the latest developments pushing AI into the autonomous era.

Google's 'Remy': The 24/7 Autonomous Workspace Executive

The most significant news comes from inside Google, where employees are currently "dogfooding" (internally testing) a brand-new AI agent code-named Remy. While Google previously introduced "agent mode" for Gemini to handle multi-step tasks, Remy represents a fundamental evolution.

Described internally as a 24/7 personal agent, Remy does not just assist; it acts. The agent is deeply integrated into Google's expansive ecosystem, possessing the ability to seamlessly navigate Gmail, Google Docs, Calendar, Drive, and Search.

How Remy Changes the Workflow:

- Proactive Management: Instead of a user manually opening an email, drafting a reply, checking a calendar, and opening a document to take notes, Remy handles this entire flow in the background.

- Continuous Operation: Remy acts more like a digital executive assistant than a traditional large language model (LLM). It monitors what matters to the user, learns their preferences over time, and executes tasks autonomously.

- Competitive Edge: This development is a direct response to tools like OpenClaw, the viral AI that gained immense traction earlier this year for its autonomous capabilities. However, Google holds a massive advantage: absolute control over the Workspace ecosystem.

The name "Remy" may be derived from the Latin Remigius (meaning oarsman or rower, symbolizing background labor) or perhaps a clever nod to the hidden culinary genius from Ratatouille. Regardless of the namesake, industry analysts expect Remy to make its debut at Google I/O 2026, scheduled for May 19th and 20th at the Shoreline Amphitheatre.

Gemini 3.2 Flash & Gemma 4: Massive Upgrades in Speed and Capability

Alongside the development of Remy, Google is actively stress-testing its next generation of foundational models.

Gemini 3.2 Flash Hits the Arena

Recently, a new model dubbed Gemini 3.2 Flash surfaced on LMArena, a public, external benchmarking platform where models are compared on real user prompts. The leak suggests that Google is preparing a broader deployment. The upgrades are technical, but they translate into practical gains:

- Precision Graphics: Enhanced SVG generation for high-precision vector graphics.

- Advanced Coding: The ability to generate complex code for interactive 3D environments and voxel-based simulations.

- Dynamic Animation: Upgraded processing for smoother transitions in video and UI design outputs.

- Real-time Responsiveness: Improved handling of interactive scenarios requiring instant feedback.

Gemma 4 and the Power of Multi-Token Prediction (MTP)

Perhaps the most crucial under-the-hood improvement comes to the Gemma 4 model family via Multi-Token Prediction (MTP) drafters. LLM inference speed has historically been bottlenecked by memory bandwidth—loading massive amounts of data just to generate text one token (word fragment) at a time.
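
The bandwidth bottleneck can be made concrete with a back-of-the-envelope estimate. The sketch below assumes that every generated token requires reading all model weights from memory once, and it ignores KV-cache reads and compute time. The model size, weight precision, and bandwidth figures are hypothetical values chosen for illustration, not Gemma 4 specifications.

```python
def max_tokens_per_second(params_billion: float,
                          bytes_per_param: float,
                          bandwidth_gb_s: float) -> float:
    """Rough upper bound on decode speed for a bandwidth-bound model.

    Assumes each token needs one full read of the weights, so the ceiling
    is memory bandwidth divided by model size.
    """
    model_gb = params_billion * bytes_per_param  # 1e9 params * bytes each = GB
    return bandwidth_gb_s / model_gb


# Hypothetical example: 8B parameters, 16-bit weights, 400 GB/s of bandwidth.
ceiling = max_tokens_per_second(params_billion=8, bytes_per_param=2,
                                bandwidth_gb_s=400)
print(f"at most about {ceiling:.0f} tokens/s")
```

Under these assumptions the ceiling is 25 tokens per second no matter how fast the processor is, which is why techniques that produce several tokens per weight read are attractive.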

MTP uses a speculative decoding approach to address this. A smaller, much faster "drafter" model predicts several tokens ahead, and the larger main model verifies them in a single pass, keeping the draft tokens it agrees with and replacing the first one it does not. Because the two models share the same KV cache, they avoid redundant processing. The result is a lossless speedup: the output is what the main model would have produced on its own, delivered up to three times faster. On specific hardware such as Apple Silicon, larger batch sizes unlock speedups of up to 2.2x, which helps these models run efficiently on edge devices.
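
The draft-then-verify idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Gemma's implementation: `toy_target` and `toy_drafter` are hypothetical stand-ins for the main model and the drafter, decoding is greedy, and neither the shared KV cache nor the batched verification pass is modelled.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy "model": context -> next token


def speculative_step(target: NextToken, drafter: NextToken,
                     context: List[Token], k: int) -> Tuple[List[Token], int]:
    """One draft-then-verify round: returns (new tokens, draft tokens accepted)."""
    # 1. The cheap drafter proposes k tokens, one after another.
    draft: List[Token] = []
    for _ in range(k):
        draft.append(drafter(context + draft))

    # 2. The target checks each position. A real system scores all k positions
    #    in one batched forward pass; this toy loop calls the target per position.
    accepted: List[Token] = []
    for proposed in draft:
        expected = target(context + accepted)
        if proposed != expected:
            # First mismatch: keep the target's token, drop the rest of the draft.
            return accepted + [expected], len(accepted)
        accepted.append(proposed)

    # 3. Every draft token matched, so the target's next token is a bonus.
    return accepted + [target(context + accepted)], k


def generate(target: NextToken, drafter: NextToken, prompt: List[Token],
             n_tokens: int, k: int = 4) -> Tuple[List[Token], int]:
    """Generate n_tokens; also return how many verification rounds were needed."""
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < n_tokens:
        new_tokens, _ = speculative_step(target, drafter, out, k)
        out.extend(new_tokens)
        rounds += 1
    return out[:len(prompt) + n_tokens], rounds


def plain_greedy(target: NextToken, prompt: List[Token], n_tokens: int) -> List[Token]:
    """Baseline: one target call per generated token."""
    out = list(prompt)
    for _ in range(n_tokens):
        out.append(target(out))
    return out


# Hypothetical toy models: the drafter agrees with the target except at
# context lengths divisible by 3, where it deliberately guesses wrong.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] * 3 + 1) % 17


def toy_drafter(ctx: List[Token]) -> Token:
    guess = toy_target(ctx)
    return (guess + 1) % 17 if len(ctx) % 3 == 0 else guess


fast, rounds = generate(toy_target, toy_drafter, [2], n_tokens=20)
slow = plain_greedy(toy_target, [2], n_tokens=20)
print(fast == slow, rounds)
```

With these toy models the speculative output is identical to plain greedy decoding, and the 20 tokens are produced in 7 verification rounds instead of 20 sequential target steps. That is the sense in which the speedup is lossless: a wrong draft only costs wasted drafter work, never a changed output.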

OpenAI Strikes Back: GPT-5.5 Instant Becomes the Default

Not to be outdone, OpenAI has officially rolled out GPT-5.5 Instant, replacing GPT-5.3 Instant as the default model for the hundreds of millions of daily ChatGPT users. While Google focuses on ecosystem dominance, OpenAI is doubling down on accuracy, speed, and user trust.

Key Improvements in GPT-5.5 Instant:

- Drastic Reduction in Hallucinations: The new model produces 52.5% fewer hallucinated claims and reduces inaccurate claims in difficult conversations by 37.3%. This is a critical leap forward for high-stakes fields like medicine, law, and finance.

- Enhanced Reasoning: Users will notice marked improvements in visual reasoning, complex mathematics, scientific coding, and intricate image analysis.

- Deep Personalization: GPT-5.5 Instant leverages context from past chats, uploaded files, and linked accounts (like Gmail) to deliver highly tailored responses. Furthermore, it introduces "memory transparency," allowing users to see exactly which past interactions influenced a specific output and granting them the power to manage that data.

Anthropic’s 'Orbit': A Work Radar for Professionals

Anthropic is taking a slightly different approach, targeting the enterprise and developer markets with an unreleased proactive briefing tool called Orbit. Currently appearing as a settings toggle in newer Claude web and mobile builds, Orbit is designed specifically for Claude Cowork and Claude Code.

Unlike a standard chatbot that waits for queries, Orbit acts as a background work radar. It connects to essential professional platforms—including Slack, GitHub, Figma, Google Calendar, and Drive.

The Orbit Experience: Before you even ask, Orbit can synthesize a personalized briefing based on your specific time zone. It will summarize recent changes in a GitHub repository, aggregate important Slack discussions, highlight design updates in Figma, and flag high-priority emails. This tool completely flips the use case from "answering questions" to "preparing you for your day." With Anthropic's upcoming "Code with Claude" conferences kicking off in San Francisco (May 6th), London (May 19th), and Tokyo (June 10th), a formal reveal of Orbit is highly anticipated.

Industry Impact: Agentic AI in Digital Marketing

The rise of agentic AI isn't limited to foundational chat models and workspace assistants; it is also reshaping industry-specific workflows. A prime example is the recent launch of Marketing Studio by Higgsfield, powered by the Seedance 2.0 model.

This platform showcases how autonomous systems are replacing traditional, labor-intensive pipelines. By simply pasting a product link or image, the AI generates a complete end-to-end video ad workflow. It doesn't just create a single clip; it generates ready-to-use campaigns across multiple formats—including UGC tutorials, product reviews, TV-style spots, and virtual try-ons. For e-commerce, Shopify vendors, and digital agencies, this represents the ability to replace a $50,000 production pipeline with a single AI session, perfectly illustrating the autonomous, multi-step capabilities that define this new era of technology.

Conclusion

The tech industry has officially moved past the novelty phase of generative text. With Google's Remy handling background operations, Gemini and Gemma pushing the boundaries of speed and multimodal generation, OpenAI securing massive gains in accuracy, and Anthropic revolutionizing enterprise workflows, 2026 is the year AI becomes truly agentic. We are no longer just talking to computers; we are hiring them.
