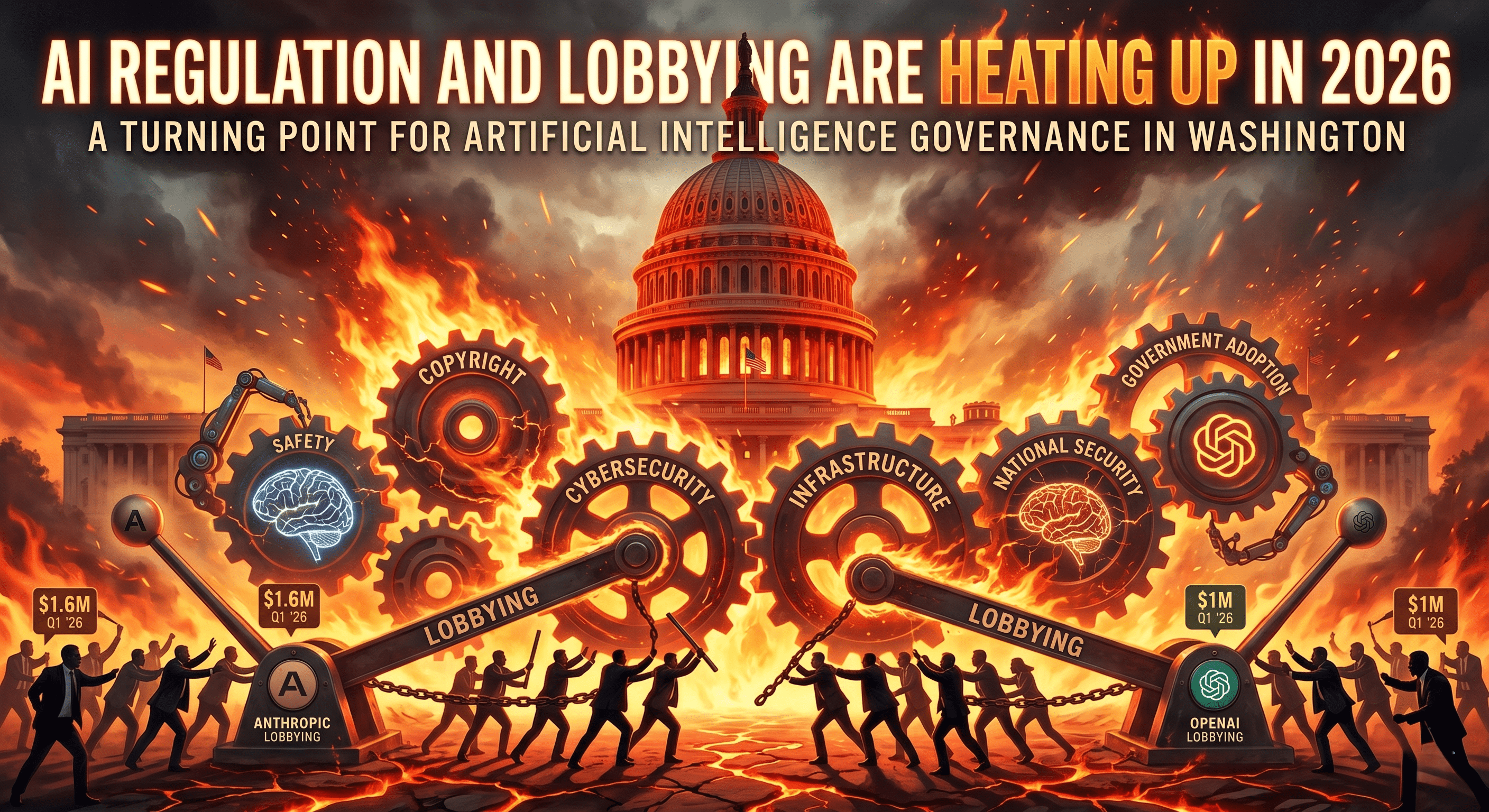

AI Regulation and Lobbying Are Heating Up in 2026

AI regulation and lobbying are becoming central issues in 2026 as major artificial intelligence companies increase their presence in Washington. Anthropic and OpenAI both posted their biggest lobbying quarters ever in Q1 2026, signaling that the AI industry is preparing for a more regulated future.

According to reported lobbying figures, Anthropic spent approximately $1.6 million in Q1 2026, while OpenAI spent around $1 million during the same period. These numbers reflect growing pressure around key policy areas, including AI safety, copyright, cybersecurity, infrastructure, national security, and government adoption of AI tools.

The message is clear: artificial intelligence is no longer just a technology issue. It is now a major political, economic, legal, and security issue.

Why AI Lobbying Is Increasing in 2026

The rise in AI lobbying comes at a time when governments are trying to understand how to regulate fast-moving AI systems without slowing innovation. Companies like OpenAI and Anthropic are building powerful models that can write code, analyze documents, generate media, assist with research, and automate workplace tasks.

As these systems become more capable, policymakers are asking harder questions:

- How safe are advanced AI models?

- Who is responsible when AI causes harm?

- Should AI companies be required to disclose training data?

- How should copyright law apply to AI-generated content?

- Can AI systems be used securely by federal agencies?

- How much energy and infrastructure should be dedicated to AI data centers?

- What role should the government play in AI development?

For AI companies, lobbying is a way to influence the answers to these questions before new rules are finalized.

OpenAI and Anthropic Are Taking Different but Related Policy Paths

OpenAI and Anthropic are two of the most influential AI companies in the world. Both companies are deeply involved in the development of advanced generative AI systems, but they often emphasize different parts of the AI policy conversation.

OpenAI is closely associated with commercial AI adoption, consumer AI tools, enterprise AI products, and the future of artificial general intelligence. Its policy interests often include AI safety, innovation, copyright, national competitiveness, and public-sector AI adoption.

Anthropic, known for its Claude AI models, has positioned itself strongly around AI safety, responsible deployment, and risk management. Its lobbying efforts reflect concerns about frontier model safety, cybersecurity, and how advanced AI should be governed.

Even if their messaging differs, both companies face the same basic challenge: they want governments to regulate AI in a way that protects the public while still allowing leading AI firms to build, deploy, and compete globally.

The Main Issues Driving AI Regulation and Lobbying

1. AI Safety

AI safety is one of the biggest reasons lobbying is increasing. Advanced AI models are becoming more powerful, and regulators want to know whether companies are testing these systems carefully before release.

Key AI safety questions include:

- Should advanced models require safety evaluations before launch?

- Should companies report dangerous capabilities to the government?

- Who should audit AI systems?

- What standards should apply to high-risk AI?

AI companies want to help shape these rules because safety requirements could affect product timelines, research priorities, and competitive advantage.

2. Copyright and Training Data

Copyright remains one of the most controversial AI policy issues. Many AI models are trained on large datasets that may include books, articles, images, music, code, and other copyrighted material.

Creators, publishers, and media companies are asking whether AI firms should pay for using copyrighted works in training data. AI companies, meanwhile, argue that model training can fall under existing legal frameworks, such as fair use in the United States, or that new licensing markets may be needed.

This issue matters because copyright rules could reshape the economics of AI. If AI firms are required to license more data, the cost of building models may rise sharply.

3. Cybersecurity and National Security

AI is increasingly tied to cybersecurity. Advanced models can help defenders detect threats, summarize security alerts, and write safer code. But they can also be misused to generate phishing emails, automate hacking workflows, or assist with malware development.

That creates a major policy challenge: governments want to encourage beneficial AI use while preventing malicious use.

Lobbying around cybersecurity may influence:

- AI model access restrictions

- Reporting requirements for dangerous capabilities

- Export controls on advanced AI systems

- Government procurement rules

- Security standards for AI developers

As AI becomes part of national security strategy, lobbying from leading AI companies will likely become even more intense.

4. AI Infrastructure and Energy Demand

AI development requires massive computing power. That means more data centers, more chips, more electricity, and more cloud infrastructure.

Infrastructure is becoming a policy issue because AI growth affects:

- Energy grids

- Water usage

- Semiconductor supply chains

- Data center permitting

- Cloud computing competition

- National AI competitiveness

AI companies are lobbying not only on software regulation but also on the physical infrastructure needed to power AI systems. In 2026, AI policy is increasingly connected to energy policy and industrial strategy.

5. Government Use of AI

Governments are also becoming major AI customers. Agencies may use AI for document review, citizen services, cybersecurity, logistics, research, and internal productivity.

But government AI use raises sensitive questions:

- Should federal agencies use commercial AI tools?

- How should sensitive government data be protected?

- Can AI be used in defense, immigration, healthcare, or law enforcement?

- What transparency should citizens receive when AI is involved in decisions?

AI firms want to shape procurement rules and safety standards because government contracts could become a major source of revenue and influence.

What This Means for Businesses

For businesses, the rise in AI lobbying signals that formal rules are getting closer. That may make AI regulation more predictable over time, but also more complex. Companies that use AI tools should prepare for new compliance expectations.

Business leaders should watch for rules related to:

- AI transparency

- Data privacy

- Copyright risk

- Employee use of AI

- AI-generated content disclosure

- Vendor risk management

- Cybersecurity requirements

- Industry-specific AI restrictions

Organizations should not wait for final laws before building responsible AI policies. A practical AI governance plan can help reduce legal, reputational, and operational risk.

What This Means for Creators and Publishers

Creators, journalists, authors, musicians, and publishers are also directly affected by AI lobbying. Copyright policy could determine whether AI companies must pay for training data, whether creators can opt out of model training, and how AI-generated content is labeled.

If AI companies successfully shape copyright rules in their favor, creators may have fewer protections. If creator groups and publishers gain more influence, AI companies may face higher licensing costs and stricter data rules.

This is one of the most important policy battles in the AI economy.

What This Means for Consumers

For everyday users, AI regulation could affect the tools they use at work, school, and home. Stronger regulation may lead to safer AI products, clearer disclosures, and better privacy protections.

However, stricter rules could also slow product launches or limit access to certain AI features.

Consumers should expect more public debate around:

- AI-generated misinformation

- Deepfakes

- Data privacy

- AI bias

- Children’s use of AI

- AI in education

- AI in healthcare

- AI replacing human jobs

The outcome of today’s lobbying efforts could shape how AI appears in daily life for years to come.

Why Q1 2026 Matters

Anthropic and OpenAI posting their biggest lobbying quarters in Q1 2026 matters because it shows that AI companies are no longer just reacting to regulation. They are actively preparing for it.

This spending suggests that leading AI firms expect major policy decisions soon. Whether the issue is safety testing, copyright, cybersecurity, infrastructure, or federal AI procurement, companies want a seat at the table.

In many ways, 2026 may become a turning point for AI governance. The industry is moving from rapid experimentation into a more mature phase where legal frameworks, compliance systems, and government relationships matter as much as technical progress.

The Bigger Picture: AI Is Becoming a Regulated Industry

The AI industry is beginning to look more like other heavily regulated sectors, such as finance, healthcare, telecommunications, and energy. These industries all depend on government rules, public trust, compliance standards, and lobbying.

AI may follow a similar path.

That does not mean innovation will stop. But it does mean the most successful AI companies may not simply be the ones with the best models. They may also be the companies that best understand regulation, safety, infrastructure, and public policy.

OpenAI and Anthropic’s rising lobbying spending is a sign of that shift.

Conclusion

AI regulation and lobbying are heating up in 2026 because artificial intelligence has become too powerful, too valuable, and too politically important to remain lightly governed. With Anthropic spending about $1.6 million and OpenAI spending about $1 million in Q1 2026, the leading AI companies are making it clear that policy is now part of their core strategy.

The biggest debates ahead will focus on AI safety, copyright, cybersecurity, infrastructure, and government use. Businesses, creators, consumers, and policymakers should pay close attention, because the rules being shaped now may define the future of AI for the next decade.

FAQ: AI Regulation and Lobbying in 2026

Why are AI companies increasing lobbying spending?

AI companies are increasing lobbying spending because governments are preparing new rules for artificial intelligence. These rules may affect safety testing, copyright, cybersecurity, infrastructure, and how government agencies use AI.

How much did Anthropic and OpenAI spend on lobbying in Q1 2026?

According to reported figures, Anthropic spent approximately $1.6 million on lobbying in Q1 2026, while OpenAI spent around $1 million.

What are the biggest AI regulation issues in 2026?

The biggest AI regulation issues include AI safety, copyright and training data, cybersecurity, national security, data privacy, infrastructure, energy use, and government adoption of AI systems.

How could AI regulation affect businesses?

AI regulation could require businesses to improve AI governance, disclose AI use, protect customer data, manage copyright risk, and ensure that AI tools are used safely and responsibly.

Will AI regulation slow innovation?

AI regulation may slow some product releases or increase compliance costs, but it could also build public trust and create clearer rules for responsible AI development.
